Cloud Gravity And Cost Control Are Quietly Deciding Enterprise AI Platforms

The Case

By 2026, Robinhood had become a reference point for what “at scale” actually means in enterprise generative AI. The trading platform wanted AI throughout its stack: customer support, fraud detection sidecars, code assistants for engineers, and internal research tools. Prototypes spun up quickly on the direct OpenAI API, exploiting its low-friction keys and strong general-purpose models.

But as usage ramped from millions to billions of tokens per day, the limitations of that choice surfaced. Finance and security teams balked at running regulated workloads on an external SaaS API with limited data residency guarantees. FinOps teams saw an untagged, fast-rising line item that didn’t align with existing cloud cost controls. Engineering teams struggled with rate limits when they load-tested real production volumes.

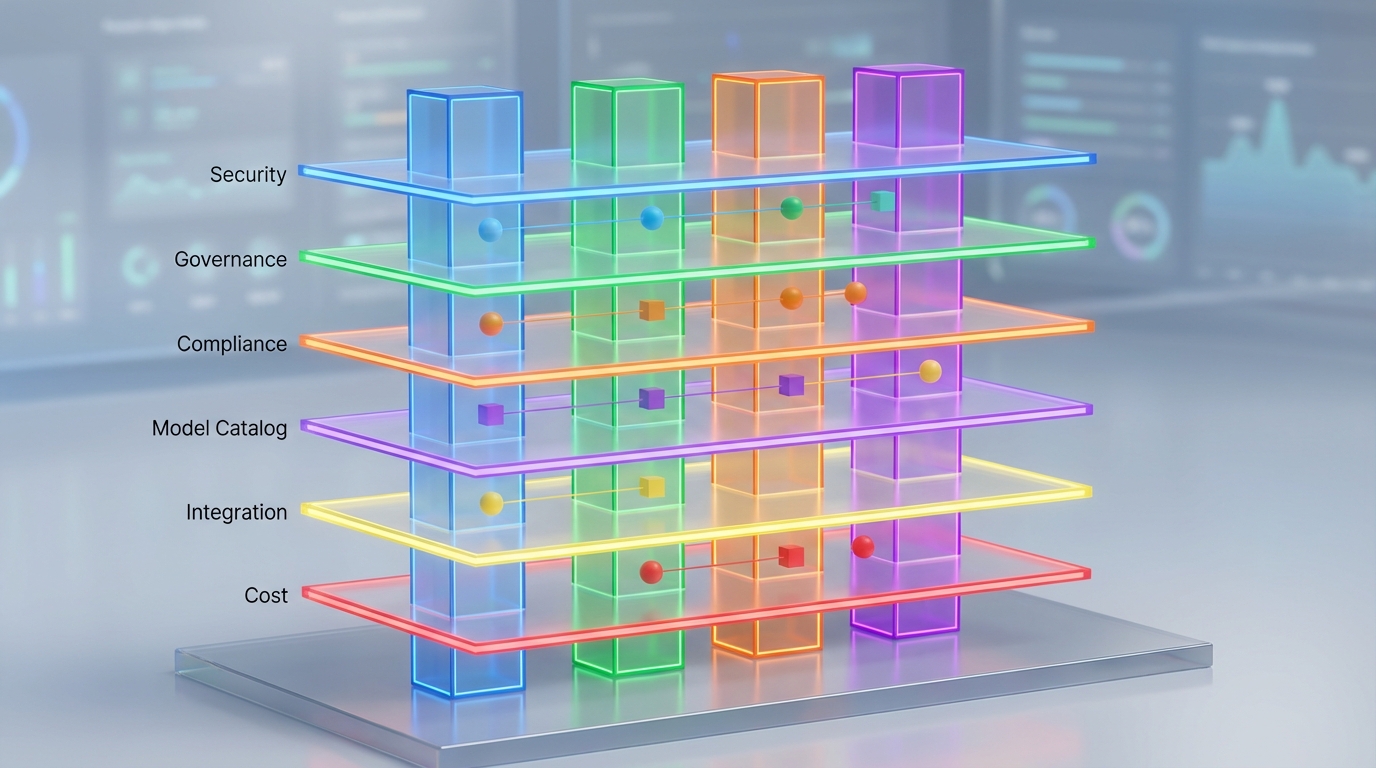

Platform leadership reframed the decision as a structured comparison: a “2026 enterprise AI platform decision framework: Vertex AI vs AWS Bedrock vs OpenAI vs OCI.” They scored each platform across a dozen dimensions – infrastructure fit, security and compliance, model performance, pricing, integration effort, FinOps visibility, and more – and weighted those scores by Robinhood’s constraints.

The picture that emerged was stark. More than 80% of Robinhood’s infrastructure spend was already on AWS. Moving sensitive trading data to Google Cloud or Oracle Cloud meant egress fees and duplicated security work. As one internal slide reportedly summarized the conclusion, “Score 10/10 for your primary cloud. Avoid OpenAI direct if >50% cloud spend elsewhere – lacks native storage/compute ties.” AWS Bedrock, plugged directly into existing IAM, VPCs, and CloudWatch, won easily on infrastructure alignment, governance, and cost observability.

Robinhood rolled out Bedrock with multi-model routing, mixing proprietary and open-weight models. By pulling usage into AWS’s native billing and provisioning system, they were able to scale toward 5B tokens per day while cutting marginal inference costs by roughly 80% compared to their initial OpenAI-heavy prototype phase. What looked like a technical model bake-off turned out, in retrospect, to be mostly a decision about cloud, compliance, and cost control.

The Pattern

The Robinhood story is not an outlier; it is a crisp instance of a general pattern. For large enterprises, generative AI platform choices in 2026 are shaped far more by existing cloud gravity and FinOps regimes than by head-to-head model benchmarks. The elaborate decision matrices – comparing Google Vertex AI, AWS Bedrock, direct OpenAI APIs, and Oracle OCI Generative AI across 10–12 dimensions – create the appearance of a greenfield choice. But once you look at how those dimensions are weighted, the outcome becomes highly predictable.

In most enterprises, three categories dominate the weighting: infrastructure and ecosystem fit, security and compliance posture, and cost predictability / FinOps integration. Together they often account for 60–70% of the total score. All three are tightly correlated with whichever cloud already owns the majority of the organization’s compute, storage, and data platforms. That makes the “winner” in a 2026 platform bake-off largely a function of prior cloud strategy.
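
To make the weighting effect concrete, here is a minimal sketch of such a scoring matrix. Every weight, score, and platform label in it is a hypothetical placeholder chosen for illustration, not a figure from Robinhood or any vendor; the only thing taken from the text is that the three cloud-correlated dimensions carry roughly 60–70% of the weight (65% here).

```python
# Hypothetical weights and scores for illustration only; none of these
# numbers come from Robinhood or any vendor.
WEIGHTS = {
    "infrastructure_fit": 0.25,
    "security_compliance": 0.25,
    "finops_integration": 0.15,
    "model_performance": 0.15,
    "pricing": 0.10,
    "integration_effort": 0.10,
}

# The dimensions that track whichever cloud already holds the data and spend.
CLOUD_CORRELATED = ("infrastructure_fit", "security_compliance", "finops_integration")


def weighted_score(scores: dict[str, float], weights: dict[str, float] = WEIGHTS) -> float:
    """Return the weighted sum of 0-10 dimension scores."""
    return sum(weights[dim] * scores[dim] for dim in weights)


def rank(platforms: dict[str, dict[str, float]]) -> list[tuple[str, float]]:
    """Rank platforms from highest to lowest weighted score."""
    totals = {name: round(weighted_score(s), 2) for name, s in platforms.items()}
    return sorted(totals.items(), key=lambda item: item[1], reverse=True)


# Assumed scores: the primary cloud leads on the cloud-correlated dimensions,
# while the direct API leads on model performance and integration effort.
PLATFORMS = {
    "primary_cloud": {
        "infrastructure_fit": 10, "security_compliance": 9, "finops_integration": 9,
        "model_performance": 7, "pricing": 7, "integration_effort": 8,
    },
    "direct_api": {
        "infrastructure_fit": 4, "security_compliance": 5, "finops_integration": 4,
        "model_performance": 10, "pricing": 6, "integration_effort": 9,
    },
}

if __name__ == "__main__":
    for name, total in rank(PLATFORMS):
        print(f"{name}: {total}")
```

With these assumed inputs the primary cloud scores 8.65 against 5.85 for the direct API, even though the direct API is given a perfect model-performance score. The gap comes almost entirely from the 65% of weight attached to dimensions the incumbent cloud wins by default.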

AWS-centric organizations like Robinhood tend to land on Bedrock because it surfaces generative models – from Anthropic to Meta to Amazon’s own Titan family – behind the same IAM, PrivateLink, CloudWatch, and billing primitives they already trust. GCP-centric analytics organizations, whose nervous system runs through BigQuery, Dataflow, and Kubernetes, almost inevitably gravitate to Vertex AI, where Gemini models sit a network hop away from their data warehouse. Oracle-heavy finance and ERP environments choose OCI Generative AI largely to avoid data egress from core Oracle databases and to keep AI within existing governance envelopes.

The outlier in this pattern is direct OpenAI: powerful models, weak native integration. It thrives where institutional gravity is weak – in greenfield startups, isolated innovation labs, or narrow unregulated use cases. But as soon as production workloads, PHI/PII, or material budgets enter the picture, security and finance gatekeepers begin to demand that AI behave like any other cloud workload. That means region-level data residency, enterprise BAAs, customer-managed keys, VPC integration, and cost tags that can be joined to a cloud bill. Direct APIs built for consumer-scale experimentation tend to struggle under those expectations.

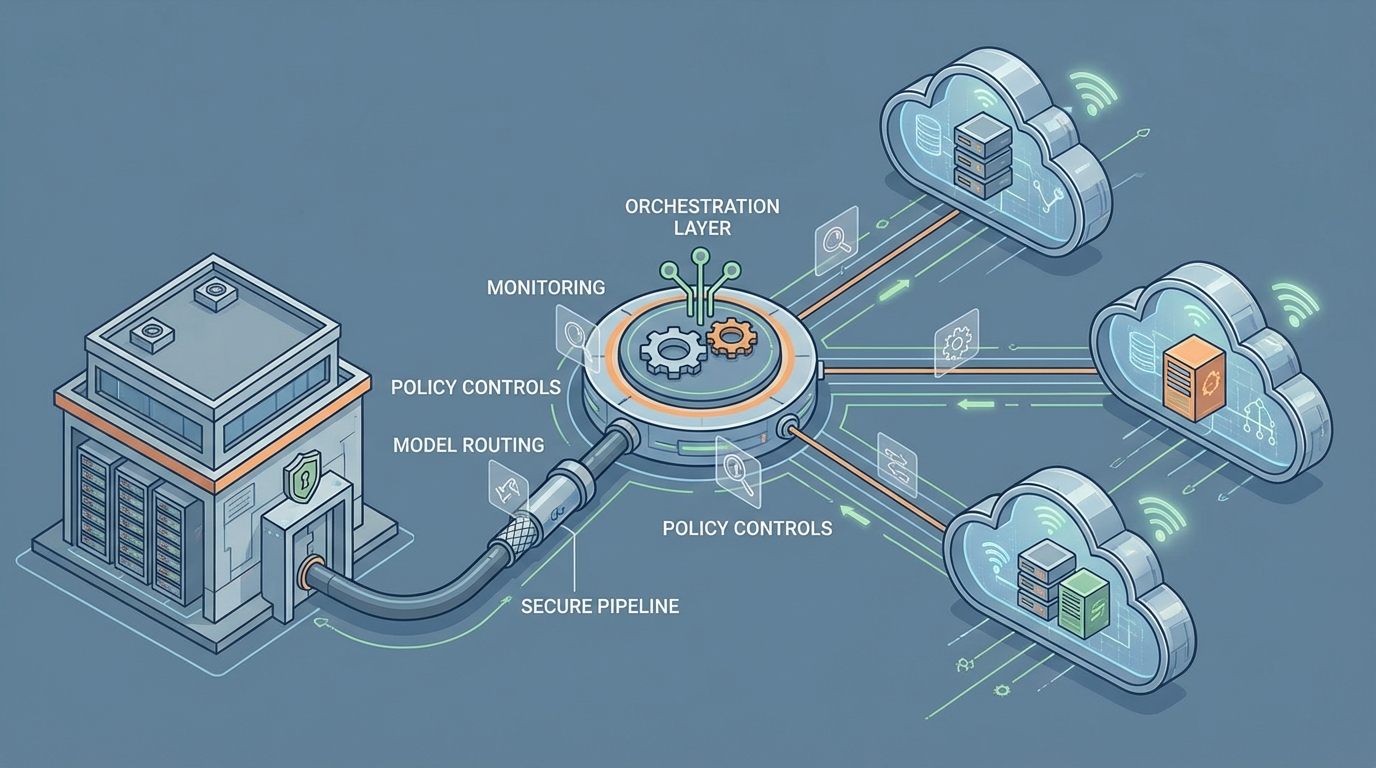

As this pattern repeats across sectors, the “AI platform war” quietly redefines itself. The visible battlefield is model quality – whose benchmark scores are higher, whose context window reaches 1M or even 2M tokens, whose reasoning benchmarks edge past rivals. The more decisive battlefield is control: whose cloud becomes the orchestration layer for a fleet of models, many of which are sourced from the same third-party providers. Bedrock, Vertex, and OCI are not only competing with OpenAI; they are also happily reselling its peers, betting that owning IAM, logging, routing, and billing around those models is where long-term power lies.

Robinhood’s internal heuristic captures the reality: “Score 10/10 for your primary cloud” is less a clever rule of thumb than an admission that the primary cloud usually wins by construction. The decision framework doesn’t overturn that gravity; it codifies it.

The Mechanics

Under the surface, a set of reinforcing incentives and feedback loops explain why cloud and cost gravity dominate decisions across Vertex AI, AWS Bedrock, OpenAI, and OCI.

Start with the hyperscalers’ incentives. Google, Amazon, and Oracle make their money on compute, storage, and managed services. Generative AI is a way to both protect and deepen that revenue: every AI call that runs “inside the cloud” drives GPU usage, database reads, logging, and networking. That’s why they bundle model access tightly with existing primitives – Vertex tying Gemini to BigQuery, Bedrock exposing models via IAM and VPC endpoints, OCI wiring LLMs directly to Oracle Database and Fusion apps. It’s also why they aggressively discount provisioned capacity, often marketing 50–70% savings versus on-demand rates if you commit to steady throughput.
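
The “if you commit to steady throughput” condition matters, and a little arithmetic shows why. The sketch below assumes a simplified billing model that is not taken from any provider’s price sheet: the commitment is a flat fee equal to `(1 - discount)` times what the full committed throughput would cost on demand, and it is paid whether or not the capacity is used. The function names are illustrative.

```python
def break_even_utilization(discount: float) -> float:
    """Utilization above which a commitment is cheaper than on-demand.

    Assumes the commitment is a flat fee of (1 - discount) times the
    on-demand cost of the full committed throughput.
    """
    if not 0 < discount < 1:
        raise ValueError("discount must be between 0 and 1 (exclusive)")
    return 1 - discount


def effective_saving(discount: float, utilization: float) -> float:
    """Saving versus paying on-demand for the traffic actually served.

    A negative result means the commitment costs more than on-demand would.
    """
    if not 0 < utilization <= 1:
        raise ValueError("utilization must be in (0, 1]")
    if not 0 < discount < 1:
        raise ValueError("discount must be between 0 and 1 (exclusive)")
    return 1 - (1 - discount) / utilization


if __name__ == "__main__":
    for discount in (0.5, 0.7):
        print(f"discount {discount:.0%}: break-even at "
              f"{break_even_utilization(discount):.0%} utilization")
    print(f"70% discount at 60% utilization: {effective_saving(0.7, 0.6):.0%} saved")
    print(f"50% discount at 40% utilization: {effective_saving(0.5, 0.4):.0%} saved")
```

Under this assumption a 50% headline discount only pays off above 50% utilization, and a 70% discount above 30%. Bursty or experimental workloads can end up paying more than on-demand, which is one reason the discounts reward organizations that consolidate steady traffic on a single platform.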

On the enterprise side, platform, security, and FinOps teams are all trying to solve a different problem: how to add powerful but unpredictable AI workloads without blowing up risk or budgets. Every external API represents potential data exfiltration and a blind spot in cost reporting. Pulling AI inside their primary cloud’s perimeter lets them reuse mature machinery – IAM roles, private networking, DLP services, centralized logging, incident response playbooks – rather than inventing bespoke controls for each LLM provider.

FinOps adds a hard edge to this. Token-based pricing can span two orders of magnitude: illustrative 2025–2026 snapshots show input costs ranging from roughly $0.0001 to $0.01 per 1,000 tokens depending on model family, with output often several times higher. At volumes approaching billions of tokens per day, that variance dwarfs many traditional infrastructure line items. Platforms like Bedrock, Vertex, and OCI respond by exposing detailed per-request metrics, labels, and cost exports that slot into existing billing pipelines. That makes it possible to compute cost-per-query, allocate spend to teams, and enforce budgets – capabilities that basic SaaS dashboards rarely match.
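
The cost-per-query, allocation, and budget steps described above can be sketched in a few lines once each request carries a team tag. This is an illustration only: the model names, the prices (expressed per million tokens), the record shape, and the budgets are all hypothetical, and real platforms export this data in their own formats.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class UsageRecord:
    """One tagged inference request (hypothetical record shape)."""
    team: str
    model: str
    input_tokens: int
    output_tokens: int


# Hypothetical USD prices per million tokens: (input, output).
PRICES_PER_MILLION = {
    "small-model": (0.10, 0.40),
    "frontier-model": (3.00, 15.00),
}


def request_cost(record: UsageRecord) -> float:
    """Cost of a single request in USD."""
    input_price, output_price = PRICES_PER_MILLION[record.model]
    return (record.input_tokens * input_price
            + record.output_tokens * output_price) / 1_000_000


def cost_by_team(records: list[UsageRecord]) -> dict[str, float]:
    """Sum request costs per team tag (showback/chargeback input)."""
    totals: dict[str, float] = defaultdict(float)
    for record in records:
        totals[record.team] += request_cost(record)
    return dict(totals)


def over_budget(totals: dict[str, float], budgets: dict[str, float]) -> list[str]:
    """Teams whose spend exceeds their budget; teams without a budget are skipped."""
    return sorted(team for team, spent in totals.items()
                  if spent > budgets.get(team, float("inf")))


if __name__ == "__main__":
    records = [
        UsageRecord("support", "small-model", 2_000_000, 500_000),
        UsageRecord("support", "frontier-model", 100_000, 20_000),
        UsageRecord("research", "frontier-model", 1_000_000, 200_000),
    ]
    totals = cost_by_team(records)
    print(totals)
    print(over_budget(totals, {"support": 5.0, "research": 5.0}))
```

None of this is sophisticated, which is the point: it only works if every request arrives with a reliable tag and token counts, and that is the part a cloud-native billing export provides without custom plumbing.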

Once an organization standardizes on a cloud-native AI platform, a familiar feedback loop kicks in:

- Internal platform teams wrap the chosen service in shared SDKs, CLIs, and “golden” templates.

- Security and compliance bake its assumptions into review checklists and approvals.

- FinOps bakes its meters and tags into showback/chargeback models.

- New projects find it easiest to adopt what already passes review and has a known cost model.

Each wave of adoption deepens the trench. Swapping in an alternative – even a technically superior one – now requires renegotiating vendor contracts, revisiting data residency analyses, retooling observability, and adjusting internal libraries. In that context, differences like “128k vs 1M vs 2M token context windows,” or a few percentage points on a benchmark suite, rarely justify the switching and coordination costs.

Direct model vendors live in the seams of this system. OpenAI, for example, excels at rapid model iteration and developer ergonomics, and many enterprises started their generative AI journey there. But over time, two things tend to happen. Either a cloud provider intermediates the relationship – offering “OpenAI-like” or partner models as a first-class managed service – or the enterprise itself builds a gateway on its primary cloud that fronts the external API with internal security and billing controls. In both paths, strategic control shifts from the model vendor to the cloud orchestrator.

Cost-optimization practices amplify these dynamics. Provisioned capacity commitments yield large discounts for predictable loads. Dynamic multi-model routing – sending simple queries to cheaper, smaller models and reserving expensive frontier models for hard cases – can reduce blended inference costs by 20–80%, as Robinhood’s reported 80% savings suggest. Prompt caching and retrieval-augmented generation further cut redundant token usage. But all of these techniques depend on tight integration between inference, telemetry, and billing. That’s exactly the integration a primary cloud provider can deliver most easily, and exactly what a stand-alone API struggles to match without ending up wrapped by someone else’s control plane.
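
The routing arithmetic behind that 20–80% range can be sketched as follows. The word-count router is a deliberately naive stand-in for whatever classifier a real system would use, and the prices are hypothetical; the only assumption that drives the result is a 10x price gap between the small and frontier models.

```python
def route(prompt: str, max_simple_words: int = 50) -> str:
    """Toy heuristic: short prompts go to the small model, the rest to frontier."""
    return "small" if len(prompt.split()) <= max_simple_words else "frontier"


def blended_cost(share_small: float, small_price: float, frontier_price: float) -> float:
    """Average price per unit of traffic when `share_small` is routed to the small model."""
    if not 0 <= share_small <= 1:
        raise ValueError("share_small must be between 0 and 1")
    return share_small * small_price + (1 - share_small) * frontier_price


def saving_vs_frontier_only(share_small: float, small_price: float,
                            frontier_price: float) -> float:
    """Fractional saving compared with sending all traffic to the frontier model."""
    return 1 - blended_cost(share_small, small_price, frontier_price) / frontier_price


if __name__ == "__main__":
    # Hypothetical prices in arbitrary units: the small model costs a tenth
    # of the frontier model.
    small, frontier = 1.0, 10.0
    for share in (0.25, 0.5, 0.9):
        saving = saving_vs_frontier_only(share, small, frontier)
        print(f"{share:.0%} routed to small model: {saving:.1%} saved")
```

With that price gap, routing a quarter of traffic to the small model saves 22.5%, and routing 90% saves 81%. The saving is driven by how much traffic can safely be classified as simple, which in turn depends on per-request telemetry that shows whether the cheaper model’s answers were good enough.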

The Implications

Seen through this lens, much of the 2026 landscape becomes predictable. Before anyone runs a benchmark, you can often guess the “winner” in an enterprise AI RFP by asking a few unglamorous questions: Which cloud holds the majority of current spend? Where do the core data warehouses and ERP systems live? Which vendor can sign the necessary BAAs and meet data sovereignty rules in required regions? The formal “2026 enterprise AI platform decision framework: Vertex AI vs AWS Bedrock vs OpenAI vs OCI” mostly makes that preexisting gravity legible.

It also suggests that, for most large organizations, the end state is not a sprawling best-of-breed mosaic but a dominant home platform with a curated multi-model roster. Multi-cloud AI will persist, but typically as a thin layer – a small set of specialized workloads on a secondary cloud for regulatory or partnership reasons, while the bulk of traffic and spend consolidates on the primary provider’s AI services. For independent model companies, the most viable route into these environments is likely via deep cloud partnerships and marketplace listings, rather than direct enterprise sales at scale.

Perhaps the most important implication is where this leaves internal teams. The critical capability is no longer picking a single “best” model; it is designing governance, routing, and FinOps patterns that make AI behave like the rest of the infrastructure. Organizations that internalize the mechanics of cloud and cost gravity will evaluate new models and platforms in terms of how easily they plug into the chosen control plane. Those that treat platform selection as a pure technical bake-off, ignoring procurement, compliance, and finance constraints, will continue to see their preferred choices overturned late in the process.

Understanding the pattern visible in cases like Robinhood’s lets technology leaders read the AI platform market more clearly. As long as hyperscalers control where enterprise data lives and how enterprise budgets are tracked, they will quietly shape which AI platforms – Vertex AI, AWS Bedrock, direct OpenAI, or OCI – are even viable contenders. Model quality will matter, but only within the boundaries those structures permit.
